Master Data Management vs Data Integration: What’s Changed Since 2021


Data mastery and intelligent workflows have the strongest impact on efficiency, revenue growth, service quality, and customer satisfaction - Deloitte insights on digital maturity and financial performance.

There’s a persistent misconception in insurance data strategy discussions: that better technology alone will fix fragmented data. It won’t. What’s changed between 2021 and 2026 is not just the tooling; it’s the stakes.

Carriers are no longer digitizing just for efficiency; they’re doing it to remain competitive in a market where underwriting, pricing, claims, and distribution increasingly depend on real-time data. Research published by McKinsey & Company in 2025 shows that insurers using advanced data and AI in core operations are already seeing loss ratio improvements and materially faster claims cycle times. Meanwhile, Deloitte notes that digital leaders in financial services are pulling away in both customer retention and operating margin.

That gap is not about who has more data. It’s about who can trust it, access it, and act on it without friction.

This is where the distinction between Master Data Management (MDM) and Data Integration stops being academic and starts becoming operational.

What is the difference between master data integration and master data management in insurance?

Imagine the insurance industry as a bustling city, with data flowing through its streets like streams of cars during rush hour. In this cityscape, two essential concepts, "master data integration" and "master data management," act as traffic controllers, each playing a vital role in keeping the data traffic flowing smoothly.

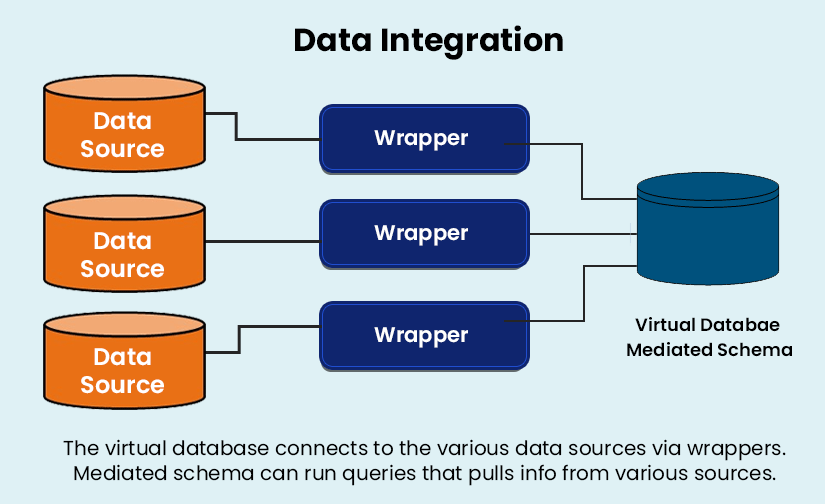

First, let's talk about master data integration: Picture this as the intricate network of roads and highways connecting different neighborhoods within the city. Just as these roads bring together people from different parts of the city, master data integration stitches together data from various corners of the insurance company. Whether it's customer details, policy information, or agent records, master data integration ensures that all this data converges seamlessly into a central hub, eliminating the chaos of data gridlock.

- The main objective of master data integration is to ensure that data from different systems or databases can be brought together seamlessly to create a unified view of key entities.

- This involves extraction, transformation, and loading (ETL), along with data cleansing, to ensure that the data is consistent and accurate across different systems.
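The ETL and cleansing steps above can be illustrated with a minimal Python sketch. The two source systems, their field names, and the shared `customer_id` key are hypothetical. Real integration work usually also needs matching logic, because systems often do not share an identifier.

```python
from dataclasses import dataclass

# Hypothetical raw extracts from two source systems with different field names.
POLICY_ADMIN_ROWS = [
    {"cust_id": "C-001", "name": "  jane DOE ", "email": "Jane.Doe@Example.com", "state": "tx"},
    {"cust_id": "C-002", "name": "Raj Patel", "email": "raj@example.com ", "state": "NY"},
]
CLAIMS_ROWS = [
    {"claimant_ref": "C-001", "full_name": "Jane Doe", "email_addr": "jane.doe@example.com", "st": "TX"},
]


@dataclass(frozen=True)
class CustomerRecord:
    customer_id: str
    name: str
    email: str
    state: str
    source: str


def extract():
    """Extract: map each source's field names onto one common shape."""
    for row in POLICY_ADMIN_ROWS:
        yield row["cust_id"], row["name"], row["email"], row["state"], "policy_admin"
    for row in CLAIMS_ROWS:
        yield row["claimant_ref"], row["full_name"], row["email_addr"], row["st"], "claims"


def transform(raw):
    """Transform and cleanse: trim whitespace and normalize casing (simplified rules)."""
    cid, name, email, state, source = raw
    return CustomerRecord(
        customer_id=cid.strip(),
        name=" ".join(name.split()).title(),
        email=email.strip().lower(),
        state=state.strip().upper(),
        source=source,
    )


def load(records):
    """Load: group cleansed records by the (assumed shared) customer ID."""
    unified = {}
    for rec in records:
        unified.setdefault(rec.customer_id, []).append(rec)
    return unified


unified_view = load(transform(r) for r in extract())
```

After the run, both `C-001` records carry the same cleansed name, email, and state. Downstream consumers can therefore treat them as one customer.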

Now, onto master data management: Imagine this as the city's urban planners and architects working tirelessly behind the scenes to design the infrastructure that supports the city's growth. Master data management is like crafting the blueprint for this data-driven city, setting the rules, standards, and guidelines that govern how data flows and is used across the insurance landscape. It's about building sturdy bridges of data governance, erecting skyscrapers of data quality, and laying down solid foundations of data consistency. Just as a well-planned city attracts residents and businesses, effective master data management builds trust and reliability in the insurance realm.

So, in essence, master data integration ensures that data from different sources can mingle freely, while master data management provides the overarching structure and governance to keep this data ecosystem thriving.

Taming data challenges in the insurance sector

Increasing digital interactions with customers are generating new types of data, and most of it is unstructured. To put this into perspective, by one widely cited estimate the world creates 2.5 quintillion bytes of data every day, and the Internet of Things is a fast-growing contributor to that volume.

Gartner reports that over 80% of enterprise data is unstructured, making traditional MDM approaches insufficient on their own.

IoT insurance data is, on its own, a massive repository of information. Insurers will use it increasingly to improve risk assessment, reduce claims leakage, and refine product pricing.

This complex treasure of Big Data is worthless if it is scattered across different systems without one consolidated view. Any data management system is worth its investment only if it is an effective interface between the database it controls and the applications that access it. This includes providing clean analytical information to drive operational decision-making.

More often than not, each insurance product line in a company has its own methodology for capturing and storing customer data. As a result, a customer who holds several products is rarely recognized as a single entity, which keeps the insurer from seeing and leveraging that customer's full value.
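Entity resolution is one common way to recognize a customer as a single entity across product lines. The sketch below uses hypothetical records and a simple deterministic rule set: two records match if they share a normalized email, or a normalized name plus date of birth. It groups the matches with a union-find structure. Production matching engines typically add fuzzy or probabilistic scoring on top of rules like these.

```python
from collections import defaultdict

# Hypothetical customer records: each product line uses its own ID scheme.
RECORDS = [
    {"id": "AUTO-17", "name": "Maria Lopez", "dob": "1985-03-02", "email": "maria.lopez@example.com"},
    {"id": "HOME-903", "name": "MARIA LOPEZ", "dob": "1985-03-02", "email": ""},
    {"id": "LIFE-55", "name": "M. Lopez", "dob": "1985-03-02", "email": "Maria.Lopez@example.com"},
    {"id": "AUTO-18", "name": "Sam Chen", "dob": "1990-11-20", "email": "sam@example.com"},
]


def match_keys(rec):
    """Deterministic match keys (an assumed rule set, not an industry standard)."""
    keys = []
    if rec["email"]:
        keys.append(("email", rec["email"].strip().lower()))
    keys.append(("name_dob", " ".join(rec["name"].lower().split()), rec["dob"]))
    return keys


def resolve(records):
    """Union-find: records that share any match key collapse into one customer."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    first_seen = {}
    for rec in records:
        for key in match_keys(rec):
            if key in first_seen:
                parent[find(rec["id"])] = find(first_seen[key])
            else:
                first_seen[key] = rec["id"]

    clusters = defaultdict(list)
    for rec in records:
        clusters[find(rec["id"])].append(rec["id"])
    return sorted(sorted(c) for c in clusters.values())


customers = resolve(RECORDS)
```

`HOME-903` joins via name and date of birth. `LIFE-55` joins via email, even though its name is abbreviated. The result is one customer spanning auto, home, and life, and a separate one for `AUTO-18`.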

According to a KPMG report, 56% of CEOs have concerns about the integrity of their data. Building confidence requires a unified data strategy, and there are two routes to cut through the opacity of diverse data silos.

-

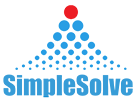

Master Data Management: It is a complete software system that centralizes the data to one master copy which is then synchronized to all applications.

-

Data Integration Layer: Unlike MDM, this process combines data, that remains in various source locations, for a unified view.
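In the MDM route, matched records from several systems are merged into one master copy using survivorship rules. The result is then pushed back out to each application. The minimal sketch below uses hypothetical sources and a hypothetical rule: the most recently updated non-empty value wins, and a fixed source priority breaks ties.

```python
from datetime import date

# Hypothetical source records already matched to the same customer.
SOURCE_RECORDS = [
    {"source": "policy_admin", "updated": date(2024, 1, 10),
     "phone": "555-0100", "address": "12 Oak St"},
    {"source": "claims", "updated": date(2025, 6, 1),
     "phone": "555-0199", "address": ""},
    {"source": "crm", "updated": date(2025, 6, 1),
     "phone": "555-0123", "address": "40 Elm Ave"},
]

# Assumed tie-breaker: a lower number means a more trusted source.
SOURCE_PRIORITY = {"policy_admin": 0, "crm": 1, "claims": 2}


def build_golden_record(records, fields):
    """Survivorship: newest non-empty value wins; ties go to the more trusted source."""
    golden = {}
    for field in fields:
        candidates = [r for r in records if r.get(field)]
        if not candidates:
            continue
        best = max(candidates,
                   key=lambda r: (r["updated"], -SOURCE_PRIORITY[r["source"]]))
        golden[field] = best[field]
    return golden


def sync_updates(golden, records):
    """Synchronization: the changes each application needs to match the master copy."""
    return {
        r["source"]: {f: v for f, v in golden.items() if r.get(f) != v}
        for r in records
    }


golden = build_golden_record(SOURCE_RECORDS, ["phone", "address"])
updates = sync_updates(golden, SOURCE_RECORDS)
```

The sync step is what distinguishes this route: every application ends up holding a copy of the master values. That is also why MDM projects touch so many systems.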


How Master Data Management and Integration Have Changed Since 2021

In 2021, the core objective for most insurers was straightforward: bring data together, either by consolidating it through Master Data Management (MDM) or by connecting it through integration, to create a unified view of the business.

That objective hasn’t disappeared, but it’s no longer sufficient.

Five years later, unifying data is no longer the end goal but the starting point. The real challenge is making that data usable in practice, whether for AI models, advanced analytics, or real-time decisions. If teams can’t easily access, understand, and act on it, a “single view” delivers very little business value.

What’s emerging instead is a more product-oriented approach to data.

Leading organizations are no longer building pipelines alone; they are building data products (curated, governed, and reusable datasets designed for specific business outcomes). These data products are expected to be:

- Discoverable, so teams across underwriting, claims, and distribution can find and trust them

- Contextual, with clear definitions, lineage, and business meaning attached

- Consumable, meaning they can be directly used by analytics tools, AI models, and operational systems without heavy rework
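One way to make the three expectations above concrete is to attach them as metadata that travels with the dataset. This is a hypothetical sketch, not a standard data-product specification. `DataProduct` and `Catalog` are illustrative names, and the schema check stands in for the contracts a real platform would enforce. It requires Python 3.9 or later for the built-in generic type hints.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class DataProduct:
    """Hypothetical descriptor covering discoverable, contextual, and consumable."""
    # Discoverable: a name, an accountable owner, and searchable tags.
    name: str
    owner: str
    tags: set[str]
    # Contextual: business definitions and upstream lineage.
    definitions: dict[str, str]
    lineage: list[str]
    # Consumable: a declared schema plus an accessor that returns rows.
    schema: dict[str, type]
    fetch: Callable[[], list[dict]]

    def read(self) -> list[dict]:
        """Return rows, rejecting any row that breaks the declared schema."""
        rows = self.fetch()
        for row in rows:
            for col, col_type in self.schema.items():
                if not isinstance(row.get(col), col_type):
                    raise TypeError(f"{self.name}: column {col!r} is not {col_type.__name__}")
        return rows


class Catalog:
    """Minimal in-memory catalog so teams can find products by tag."""

    def __init__(self) -> None:
        self._products: dict[str, DataProduct] = {}

    def register(self, product: DataProduct) -> None:
        self._products[product.name] = product

    def search(self, tag: str) -> list[str]:
        return sorted(p.name for p in self._products.values() if tag in p.tags)


# Usage with hypothetical names and a stubbed data source.
product = DataProduct(
    name="open_claims_by_customer",
    owner="claims-data-team",
    tags={"claims", "customer"},
    definitions={"open_claims": "Claims reported but not yet closed"},
    lineage=["claims_system.claims", "mdm.customer_golden_record"],
    schema={"customer_id": str, "open_claims": int},
    fetch=lambda: [{"customer_id": "CUST-1", "open_claims": 2}],
)
catalog = Catalog()
catalog.register(product)
```

An underwriting analyst searching the catalog for `"claims"` finds the product. They can read its definitions and lineage before trusting it, and they get rows that already match a declared schema.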

This shift is being reinforced by industry research. Gartner identified “highly consumable data products” as a defining trend in modern data and analytics strategies, reflecting the growing need to move beyond data availability toward data usability.

For insurers, this means that the success of MDM and data integration is no longer measured by whether a unified view exists but by whether that data can drive decisions, automate processes, and power AI at scale.

MDM technology can cover a single domain or multiple domains. A multi-domain MDM platform supports different data assets, such as Customer Data Management and Product Information Management.

The disadvantages of Master Data Management (MDM)

MDM is complex and requires the involvement of many stakeholders. Because of this complexity, MDM implementation budgets can be too high to justify easily.

MDM struggles to reconcile externally sourced, machine-generated, or unstructured data. That puts it at a decided disadvantage, because the insurance sector is moving toward IoT and third-party data in its digital transformation journey. Finally, an MDM implementation may end up creating yet another data silo in the business ecosystem.


A Data Integration Layer

Data silos need not be the devil they are made out to be. Data silos in insurance allow carriers to comply with different state regulations, and some data must be stored separately to satisfy legal requirements. Data might also be too valuable to specific business operations to be consolidated or normalized to suit MDM data rules. This is why many insurtech companies advocate a data integration platform to create a unified view.

A data integration layer is not a single, packaged solution like MDM. It is an architectural design that operates at the compute layer and can therefore connect to data wherever it resides, in the cloud or on-premise. Above the compute layer sits the EKG (Enterprise Knowledge Graph) layer, which uses a semantic graph to map entities, their metadata, and the relationships between them. This layer takes a data virtualization approach to connect to different physical data stores. Because it creates a virtualized view of the underlying physical environment, it removes the need to physically move data, as an MDM system does.
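The sketch below illustrates the virtualization idea under stated assumptions. An in-memory SQLite database stands in for an on-premise policy store, and a plain function stands in for a cloud claims API. A small set of (subject, predicate, object) triples plays the role of the knowledge graph. It is not modeled on any specific product, and production knowledge graphs typically use standards such as RDF and SPARQL. The point is that the customer view is assembled at query time from data left in place.

```python
import sqlite3

# Hypothetical on-premise policy store (in-memory SQLite stands in for it).
policy_db = sqlite3.connect(":memory:")
policy_db.execute("CREATE TABLE policies (policy_no TEXT, customer_id TEXT, line TEXT)")
policy_db.executemany(
    "INSERT INTO policies VALUES (?, ?, ?)",
    [("P-100", "CUST-1", "auto"), ("P-200", "CUST-1", "home")],
)


def fetch_policies(customer_id):
    """Query the policy store in place; rows are read, not copied to a central store."""
    cur = policy_db.execute(
        "SELECT policy_no, line FROM policies WHERE customer_id = ? ORDER BY policy_no",
        (customer_id,),
    )
    return [{"policy_no": policy_no, "line": line} for policy_no, line in cur.fetchall()]


def fetch_claims(policy_no):
    """Stand-in for a hypothetical cloud claims API."""
    claims = {"P-100": [{"claim_id": "CL-9", "status": "open"}]}
    return claims.get(policy_no, [])


# Semantic layer: triples describing entities, their relationships, and where each lives.
GRAPH = {
    ("Customer", "holds", "Policy"),
    ("Policy", "has", "Claim"),
    ("Policy", "stored_in", "policy_store"),
    ("Claim", "stored_in", "claims_service"),
}
CONNECTORS = {"policy_store": fetch_policies, "claims_service": fetch_claims}


def objects(subject, predicate):
    """All objects linked to `subject` by `predicate` in the graph."""
    return sorted(o for s, p, o in GRAPH if s == subject and p == predicate)


def fetch(entity, key):
    """Route a request to whichever physical store the graph says holds the entity."""
    (store,) = objects(entity, "stored_in")
    return CONNECTORS[store](key)


def customer_view(customer_id):
    """Virtual unified view: each source is queried on demand, nothing is persisted."""
    policies = fetch("Policy", customer_id)
    for policy in policies:
        policy["claims"] = fetch("Claim", policy["policy_no"])
    return {"customer_id": customer_id, "policies": policies}


view = customer_view("CUST-1")
```

If the claims service later moves, only the `stored_in` triple and its connector change. The code that asks for a customer view stays the same.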

An insurance ecosystem can run as many as 500 applications, and that number will continue to grow. Most of these applications cannot easily communicate with each other, and eliminating silos through data migration and consolidation is not feasible. A more practical way to overcome the communication hurdle is an integration layer that avoids creating copies of the data. Data integration platforms can also cleanse and monitor data so that it complies with data governance rules.
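Cleansing and monitoring against governance rules can happen as records pass through the integration layer, without persisting another copy. The minimal sketch below uses hypothetical rule names and formats. A real platform would more likely express such rules declaratively and report the violation counts to a data-quality dashboard.

```python
import re
from collections import Counter

# Hypothetical governance rules: name -> predicate a clean record must satisfy.
RULES = {
    "policy_no_format": lambda r: re.fullmatch(r"P-\d{3,}", r.get("policy_no", "")) is not None,
    "state_is_two_letters": lambda r: re.fullmatch(r"[A-Z]{2}", r.get("state", "")) is not None,
    "premium_non_negative": lambda r: isinstance(r.get("premium"), (int, float)) and r["premium"] >= 0,
}


def cleanse(record):
    """Light in-flight cleansing: trim strings and upper-case the state code."""
    out = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
    if "state" in out:
        out["state"] = out["state"].upper()
    return out


def monitor(records):
    """Cleanse each record, pass through the ones that satisfy every rule, tally violations."""
    violations = Counter()
    clean = []
    for rec in records:
        rec = cleanse(rec)
        failed = [name for name, rule in RULES.items() if not rule(rec)]
        violations.update(failed)
        if not failed:
            clean.append(rec)
    return clean, violations


# Hypothetical batch read from a source system.
BATCH = [
    {"policy_no": " P-100", "state": "tx", "premium": 1200.0},
    {"policy_no": "100", "state": "NY", "premium": 900},
    {"policy_no": "P-300", "state": "Texas", "premium": -5},
]
clean, violations = monitor(BATCH)
```

Cleansing repairs the first record's stray whitespace and lower-case state. The other two records are held back, and each failed rule is counted so data stewards can see where quality breaks down.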

The biggest benefit of data integration layers is that they can bring into their ambit the data that powers digital transformation. As long as insurers deal only with structured information, a database management system will work acceptably in an enterprise data landscape. That landscape, though, is already changing: the increasing relevance of external data sources, the emergence of IoT, and the shift to multi-cloud environments call for a more responsive data strategy. Data integration platforms like SimpleINSPIRE combine the flexibility of the cloud with computational power to deliver data and analytics to the frontline.

